Demo Videos

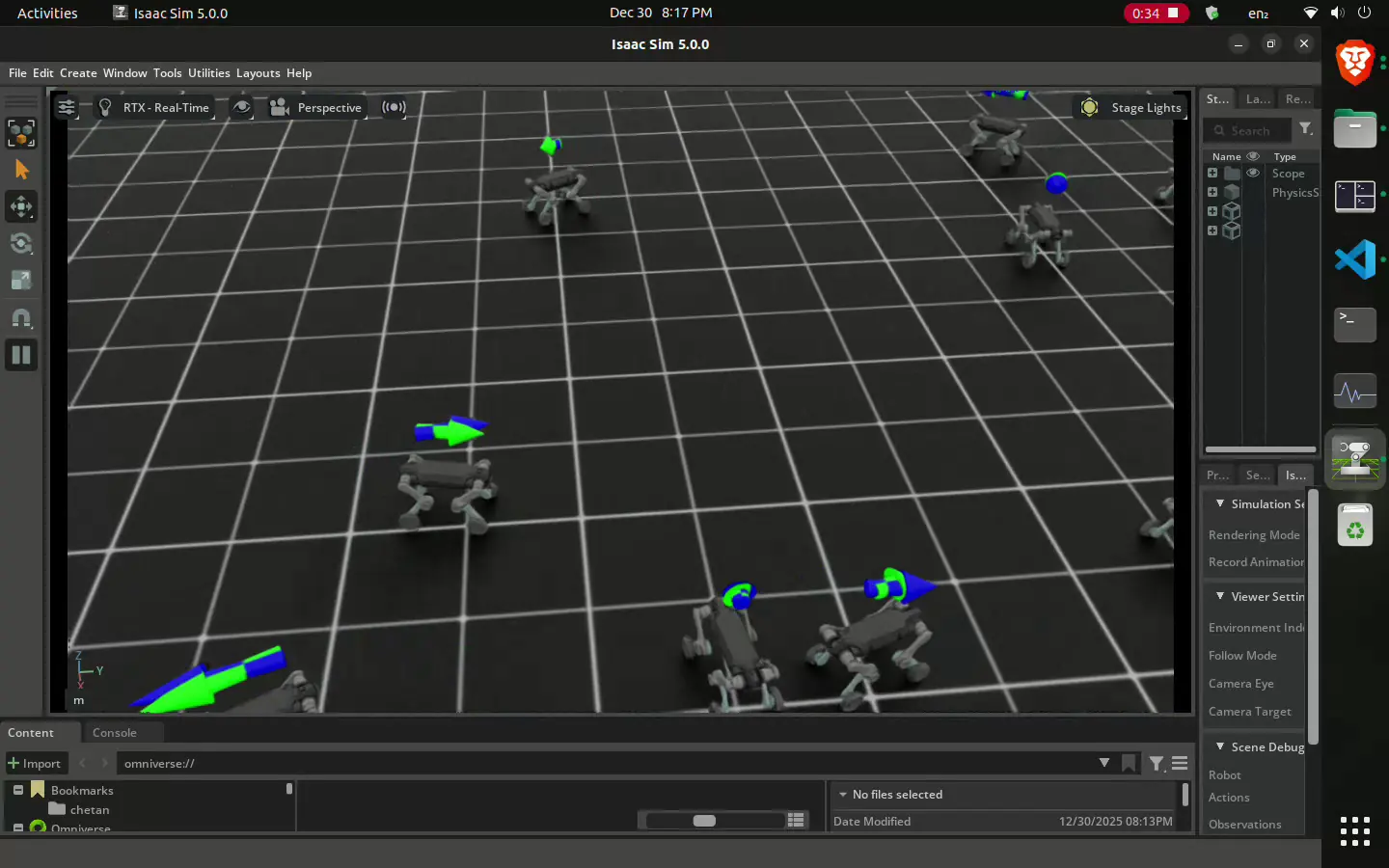

Quadruped Locomotion

Designing a locomotion policy for a custom 12-DOF quadruped robot. The agent has learned the fundamental gait mechanics and can recover its balance. Current development focuses on refining the reward functions to minimize foot slip and improve gait symmetry, so that a gait that is already stable in simulation also moves realistically.
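To illustrate the kind of reward terms described above, here is a minimal plain-Python sketch of a foot-slip penalty and a gait-symmetry penalty. It is not this project's reward code. The `Foot` record, the `FL`/`FR`/`RL`/`RR` leg names, the weights, and the assumption of a trot gait (where diagonal feet should share the same contact state) are all hypothetical choices made for the example.

```python
from dataclasses import dataclass


@dataclass
class Foot:
    name: str                      # leg label, e.g. "FL", "FR", "RL", "RR" (hypothetical naming)
    in_contact: bool               # True if the foot touches the ground this step
    vel_xy: tuple[float, float]    # horizontal foot velocity in the world frame (m/s)


def foot_slip_penalty(feet: list[Foot]) -> float:
    """Sum of squared horizontal speeds of all feet in contact.

    A planted foot should not move, so any horizontal velocity while in
    contact is treated as slip. Feet in the air are ignored."""
    return sum(f.vel_xy[0] ** 2 + f.vel_xy[1] ** 2 for f in feet if f.in_contact)


def trot_symmetry_penalty(feet: list[Foot]) -> float:
    """Count diagonal leg pairs whose contact states disagree.

    Assumes a trot, where FL/RR and FR/RL touch down and lift off together."""
    contact = {f.name: f.in_contact for f in feet}
    pairs = (("FL", "RR"), ("FR", "RL"))
    return float(sum(contact[a] != contact[b] for a, b in pairs))


def gait_shaping_reward(feet: list[Foot], w_slip: float = 0.1, w_sym: float = 0.05) -> float:
    """Weighted penalty (negative reward) meant to be added to a main
    velocity-tracking reward. Weights are illustrative assumptions."""
    return -(w_slip * foot_slip_penalty(feet) + w_sym * trot_symmetry_penalty(feet))
```

Because both terms are penalties, their weights mainly trade off against the main tracking reward: raising `w_slip` pushes the policy toward firmer foot placement, while raising `w_sym` pushes it toward a regular diagonal rhythm.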

Just fine-tuning the parameters

Goal: Walking | Result: Learned to Stand

Moving with sliders

Rough terrain policy

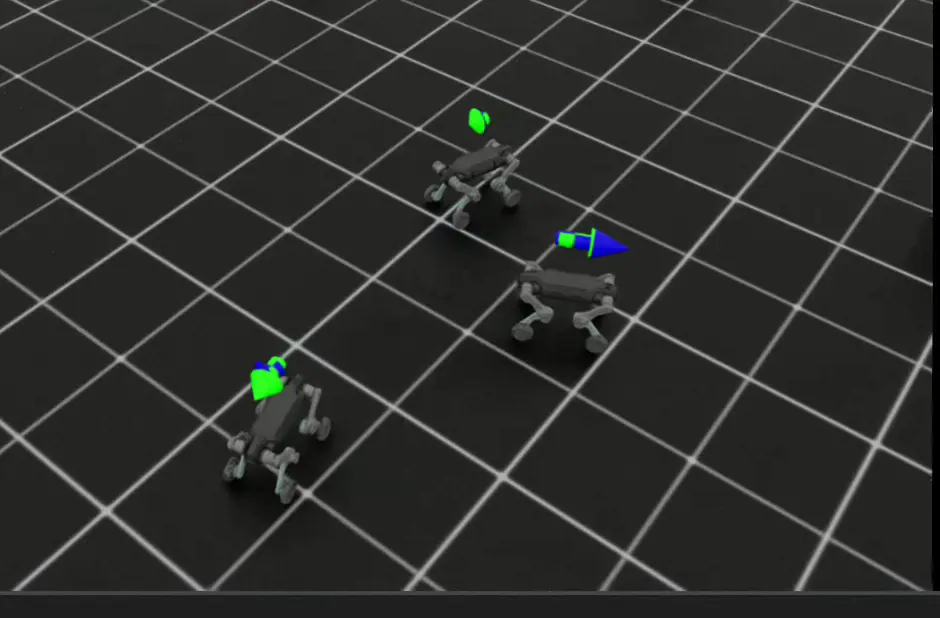

Deep Robotics Lynx M20 – Wheeled Navigation via Reinforcement Learning

Implemented car-like wheeled navigation for the Deep Robotics Lynx M20 using reinforcement learning. The robot URDF was adapted by fixing all non-driving joints, enabling pure wheel-based motion. The policy learns steering and velocity control for stable ground-vehicle-style navigation. Ongoing work focuses on reducing wheel slip and improving trajectory smoothness across varying terrain friction.
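The URDF change described above (turning every non-driving joint into a fixed joint) can be sketched at the file level with Python's standard `xml.etree.ElementTree`. This is an illustrative sketch, not the script used for the M20: the caller supplies the set of driving (wheel) joint names, and any joint names used with it are hypothetical since the actual M20 joint names are not listed here.

```python
import xml.etree.ElementTree as ET


def fix_non_driving_joints(urdf_xml: str, driving_joints: set[str]) -> str:
    """Return a copy of the URDF in which every joint not listed in
    `driving_joints` is converted to a fixed joint."""
    root = ET.fromstring(urdf_xml)
    # Only direct <joint> children of <robot>; <transmission> blocks also
    # contain <joint> tags that must not be rewritten.
    for joint in root.findall("joint"):
        if joint.get("name") in driving_joints or joint.get("type") == "fixed":
            continue
        joint.set("type", "fixed")
        # A fixed joint has no degree of freedom, so axis/limit/dynamics
        # no longer apply; drop them to keep the file clean.
        for tag in ("axis", "limit", "dynamics", "safety_controller"):
            for child in joint.findall(tag):
                joint.remove(child)
    return ET.tostring(root, encoding="unicode")
```

A fixed joint locks the child link at the joint's zero angle, so if the legs should be held in a non-zero pose, that angle would need to be folded into the joint's `<origin>` before fixing it.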

Learning steering and velocity control

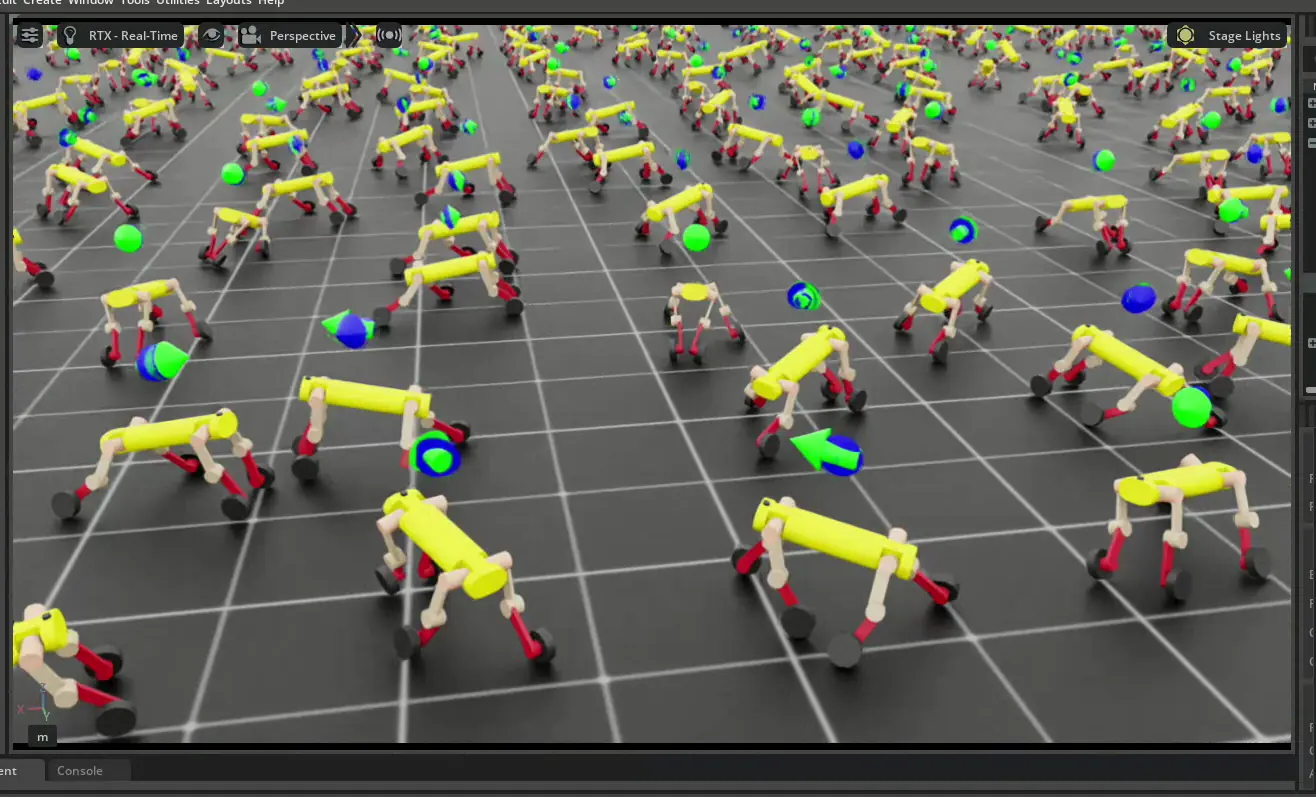

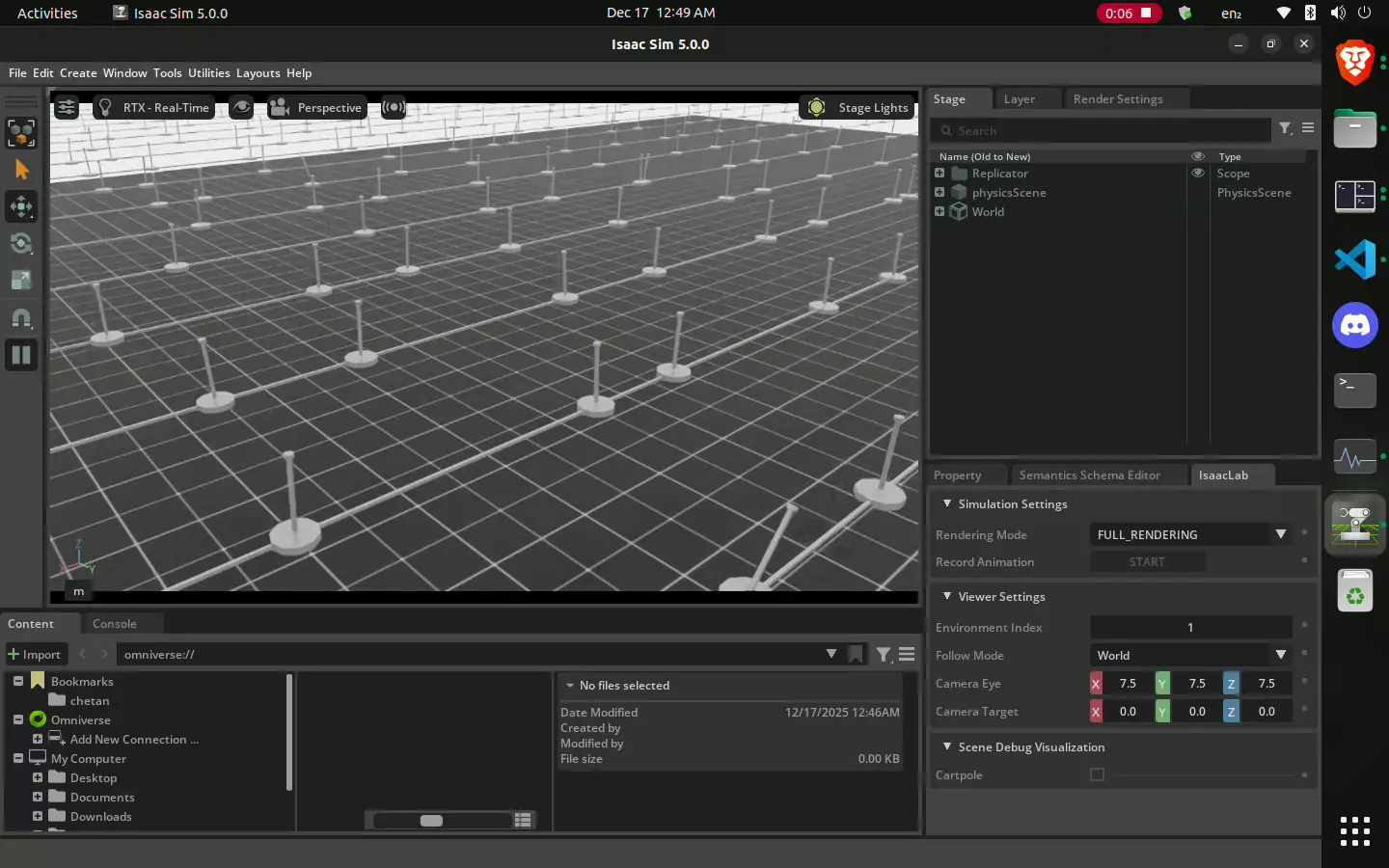

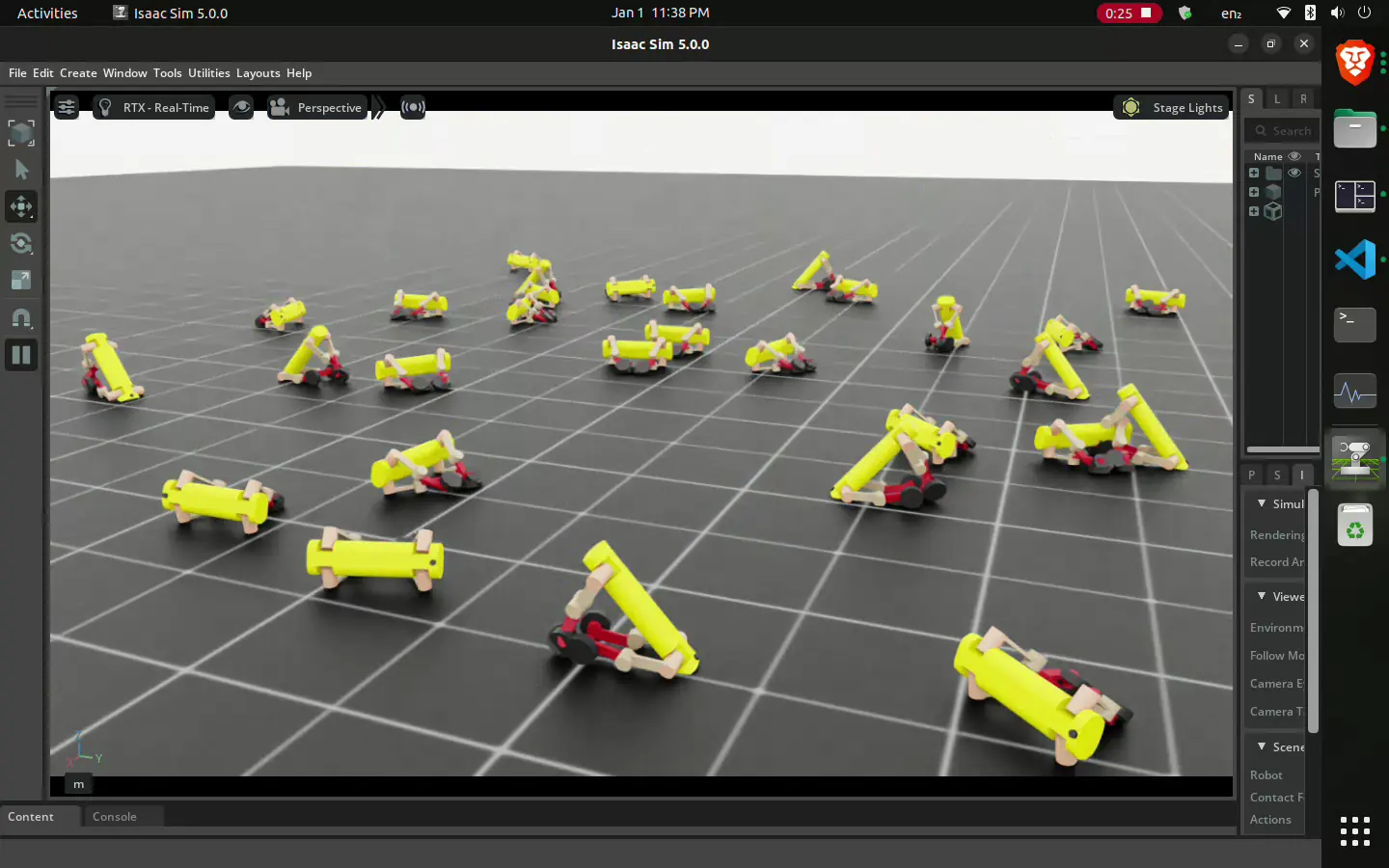

Custom Rotary CartPole (Non-Linear Control)

Extended the RL framework to rotary mechanics, addressing a more complex continuous-control problem. The agent was trained to swing the pole up from its hanging position and then balance it upright. The project showed that the same PPO pipeline carries over to these non-linear dynamics, with full training taking approximately 2 minutes on 50,000 parallel environments.
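A swing-up task needs a reward that still gives a useful signal while the pole is hanging down. One common shaping choice, sketched below as an assumption rather than this project's actual reward, is the cosine of the wrapped pole angle, plus a bonus near upright and small penalties on velocities, arm deviation, and motor effort. The angle convention (0 = upright) and every weight are hypothetical.

```python
import math


def wrap_angle(a: float) -> float:
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (a + math.pi) % (2.0 * math.pi) - math.pi


def swing_up_reward(pole_angle: float, pole_vel: float,
                    arm_angle: float, arm_vel: float, action: float,
                    w_vel: float = 0.01, w_arm: float = 0.1, w_act: float = 0.001,
                    balance_bonus: float = 1.0, balance_tol: float = 0.2) -> float:
    """Shaped reward for a rotary pendulum swing-up.

    Assumed convention: pole_angle = 0 is upright, +/-pi is hanging down."""
    theta = wrap_angle(pole_angle)
    upright = math.cos(theta)  # +1 upright, -1 hanging: dense signal during swing-up
    bonus = balance_bonus if abs(theta) < balance_tol else 0.0  # extra reward once balanced
    penalty = (w_vel * (pole_vel ** 2 + arm_vel ** 2)  # discourage wild spinning
               + w_arm * wrap_angle(arm_angle) ** 2    # keep the arm near its start position
               + w_act * action ** 2)                  # limit motor effort
    return upright + bonus - penalty
```

The cosine term is what makes swing-up learnable: a reward given only near upright would be almost never observed from the hanging start, whereas the cosine rewards every bit of height gained.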

Training Phase:

Testing Model:

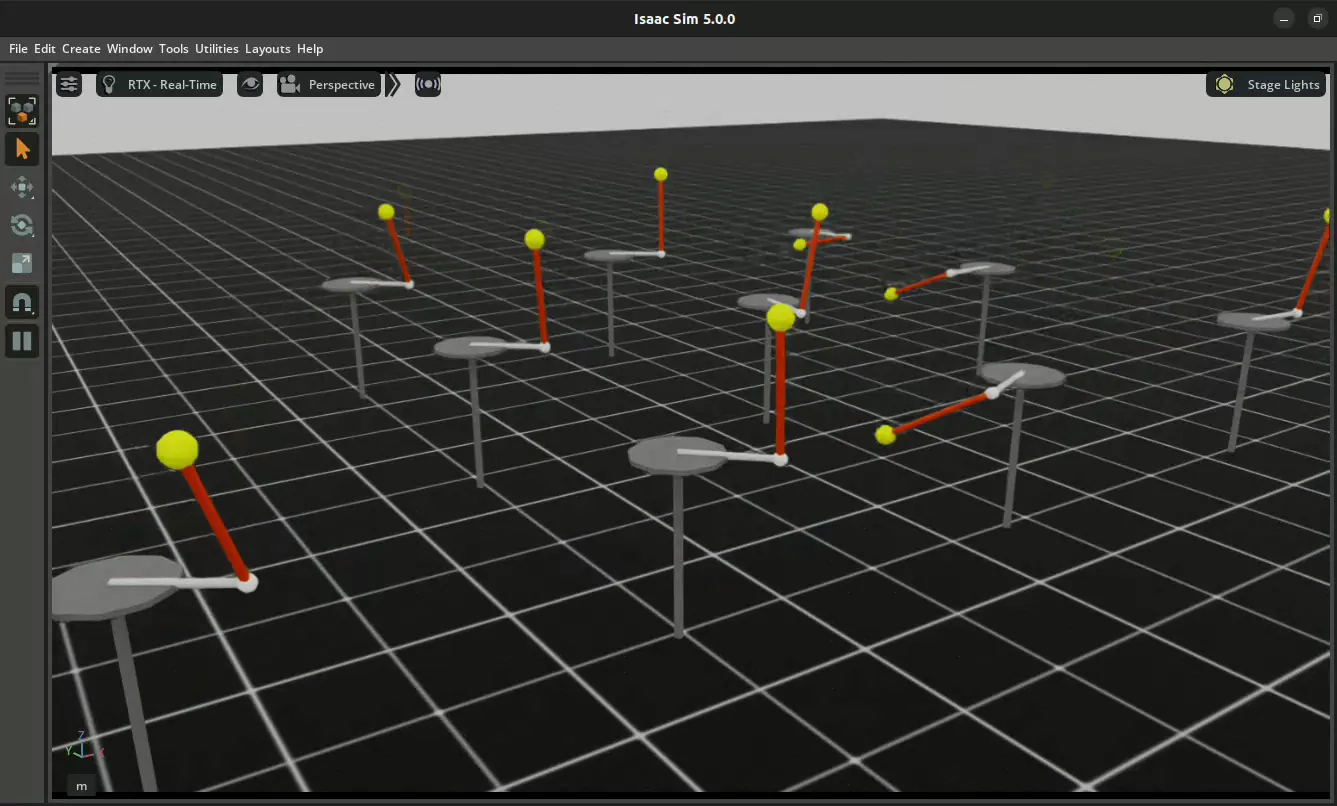

Custom Linear CartPole (Training & Test)

Developed a custom Linear CartPole environment to establish a robust RL pipeline. The agent was trained with the Proximal Policy Optimization (PPO) algorithm on a Manager-Based RL architecture and learned to balance the pole reliably. With 50,000 environments running in parallel on an RTX 5060 Ti, training converged in under 2 minutes.
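For reference, the core of PPO is its clipped surrogate objective: the probability ratio between the new and old policy is clipped to [1 - ε, 1 + ε] so that a single update cannot move the policy too far. The sketch below computes that loss for a small batch in plain Python. It illustrates the math only and is not this project's training code, which would run batched on the GPU across all parallel environments inside an RL library. `clip_eps = 0.2` is a commonly used default, assumed here.

```python
import math


def ppo_clip_loss(new_log_probs: list[float], old_log_probs: list[float],
                  advantages: list[float], clip_eps: float = 0.2) -> float:
    """Negative PPO clipped surrogate objective, averaged over the batch
    (negated so that minimizing the loss maximizes the objective)."""
    if not (len(new_log_probs) == len(old_log_probs) == len(advantages)):
        raise ValueError("all inputs must have the same length")
    total = 0.0
    for new_lp, old_lp, adv in zip(new_log_probs, old_log_probs, advantages):
        ratio = math.exp(new_lp - old_lp)                          # pi_new(a|s) / pi_old(a|s)
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)  # clamp to [1-eps, 1+eps]
        total += min(ratio * adv, clipped * adv)                   # pessimistic (lower) bound
    return -total / len(advantages)
```

Taking the minimum of the clipped and unclipped terms means the policy gains nothing from pushing the ratio beyond the clip range in the direction the advantage favors, which is what keeps updates stable even with very large parallel batches.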

Deployment / Testing Model: