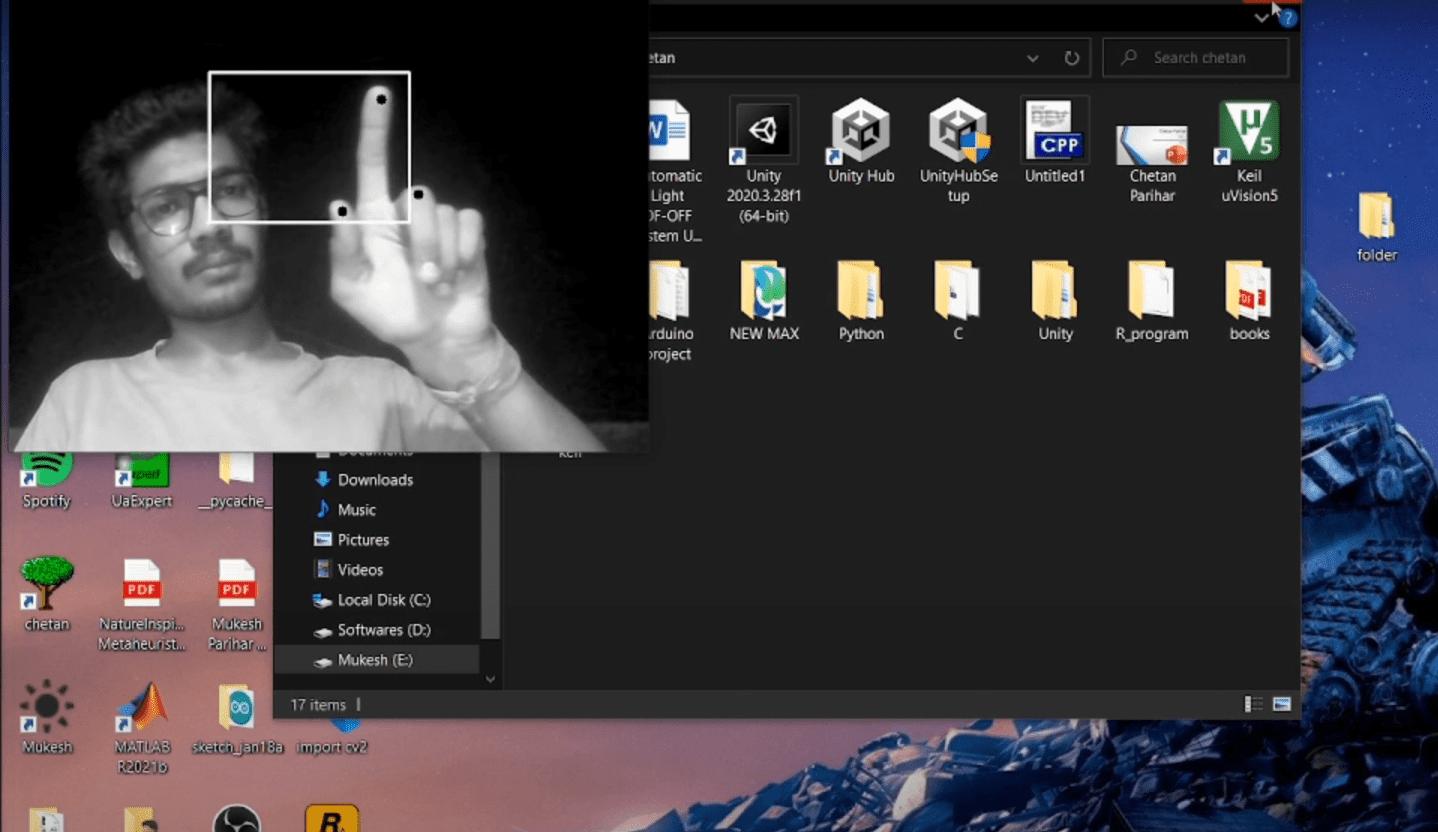

Project Demo

Project Details

This project controls the mouse pointer with hand gestures. By tracking fingertip movements, it lets users move the pointer and perform click actions without touching a physical peripheral device.

How it Works

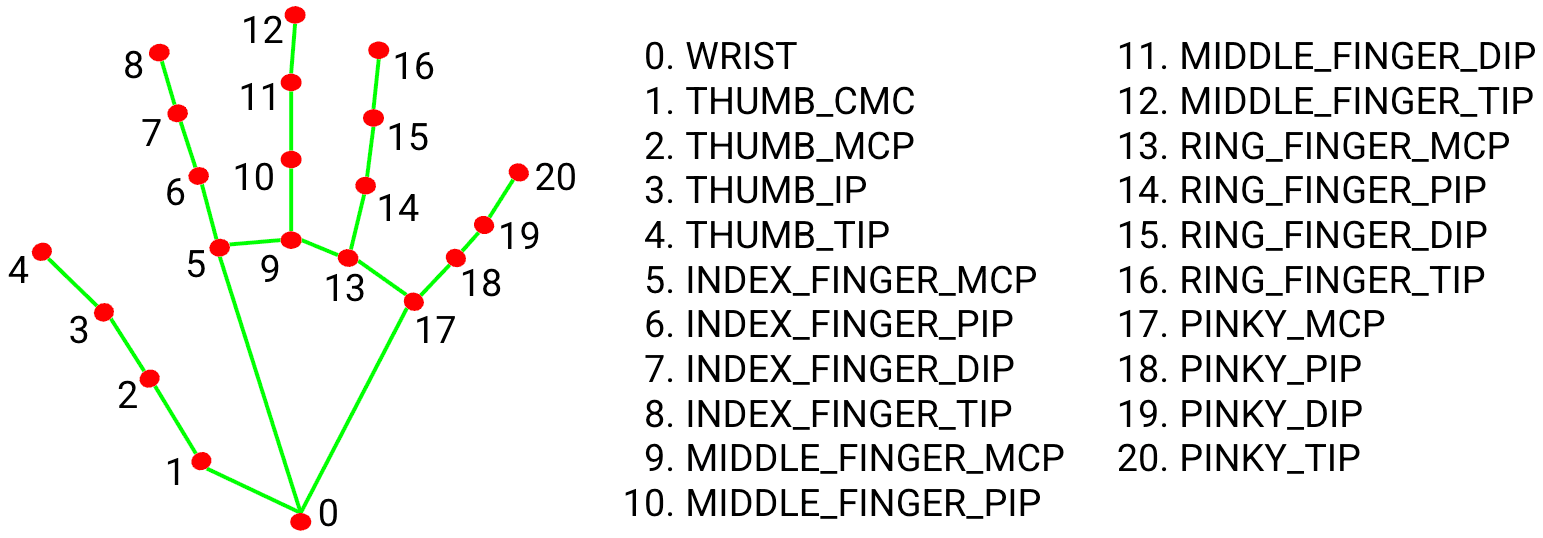

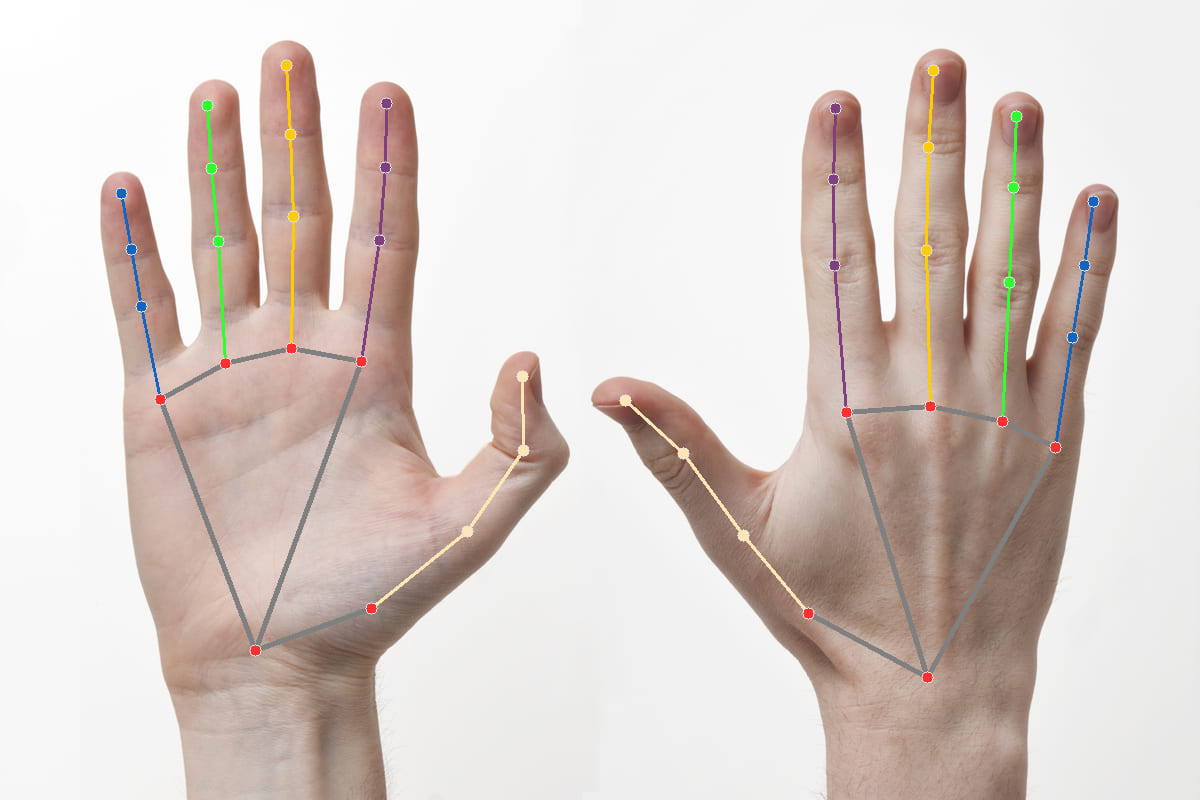

The system captures live video of the user's hand and translates the detected gestures into pointer movement and clicks. Hand tracking relies on the MediaPipe library, whose pre-trained hand landmark models (open-sourced by Google) detect and track 21 3D landmarks on the hand.
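The capture-and-track loop described above might look roughly like the following. This is a minimal sketch, not the project's actual code: it assumes the legacy `mp.solutions.hands` API, a webcam at index 0, and the helper names `fingertip_xy` and `run_tracking`, which are hypothetical. The landmark indices 8 (index fingertip) and 4 (thumb tip) follow MediaPipe's hand landmark numbering.

```python
INDEX_FINGER_TIP = 8  # MediaPipe Hands index of the index fingertip
THUMB_TIP = 4         # MediaPipe Hands index of the thumb tip


def fingertip_xy(landmarks, tip_index=INDEX_FINGER_TIP):
    """Return the normalized (x, y) of one landmark out of the 21 for a hand."""
    if len(landmarks) != 21:
        raise ValueError("expected 21 hand landmarks")
    point = landmarks[tip_index]
    return point.x, point.y


def run_tracking(camera_index=0):
    """Print the index fingertip position for each frame until 'q' is pressed."""
    # Imported lazily so the helper above works without a camera or display.
    import cv2
    import mediapipe as mp

    capture = cv2.VideoCapture(camera_index)
    with mp.solutions.hands.Hands(max_num_hands=1) as hands:
        while capture.isOpened():
            ok, frame = capture.read()
            if not ok:
                break
            frame = cv2.flip(frame, 1)  # mirror so motion matches the user's view
            # MediaPipe expects RGB, while OpenCV delivers BGR frames.
            results = hands.process(cv2.cvtColor(frame, cv2.COLOR_BGR2RGB))
            if results.multi_hand_landmarks:
                landmarks = results.multi_hand_landmarks[0].landmark
                print(fingertip_xy(landmarks))
            cv2.imshow("hand tracking", frame)
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    capture.release()
    cv2.destroyAllWindows()
```

Calling `run_tracking()` opens the camera. The coordinates it prints are normalized to the range 0 to 1 relative to the frame, so they still need to be scaled to the screen before they can drive a cursor.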

Key Technologies

- OpenCV: Handles video input, capturing the live stream and processing individual frames for analysis.

- MediaPipe: Handles the complex task of real-time hand detection and landmark tracking.

- PyAutoGUI: Moves the OS cursor to the position derived from the tracked coordinates and issues click events.
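As an illustration of how tracked coordinates could reach the cursor, here is a hedged sketch of the last step. The names `to_screen`, `is_pinch`, and `drive_cursor` are hypothetical, and so are the margin, inset, and threshold values. Using a thumb-to-index pinch as the click gesture is an assumption, since the project description does not say which gesture triggers a click. Only `pyautogui.size()`, `pyautogui.moveTo()`, and `pyautogui.click()` are real PyAutoGUI calls.

```python
import math


def to_screen(nx, ny, screen_w, screen_h, margin=0.1, inset=1):
    """Map normalized (0..1) frame coordinates to screen pixels.

    `margin` ignores the outer band of the camera frame so the screen edges
    are reachable without moving the hand to the very edge of the frame.
    `inset` keeps the result away from the screen corners, which would
    otherwise trip PyAutoGUI's fail-safe.
    """
    span = 1.0 - 2.0 * margin
    fx = min(max((nx - margin) / span, 0.0), 1.0)
    fy = min(max((ny - margin) / span, 0.0), 1.0)
    x = inset + round(fx * (screen_w - 1 - 2 * inset))
    y = inset + round(fy * (screen_h - 1 - 2 * inset))
    return x, y


def is_pinch(thumb_xy, index_xy, threshold=0.05):
    """Treat thumb and index fingertips closer than `threshold` as a click."""
    return math.dist(thumb_xy, index_xy) < threshold


def drive_cursor(index_xy, thumb_xy):
    """Move the OS cursor to the index fingertip and click on a pinch."""
    # Imported lazily because PyAutoGUI needs a display to load.
    import pyautogui

    screen_w, screen_h = pyautogui.size()
    x, y = to_screen(index_xy[0], index_xy[1], screen_w, screen_h)
    pyautogui.moveTo(x, y)
    if is_pinch(thumb_xy, index_xy):
        pyautogui.click()
```

In a real loop, a pinch held across several frames would fire repeated clicks, so a debounce (for example, clicking only when the pinch state changes from open to closed) would likely be needed.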

The project offers a hands-free approach to computer interaction, adding convenience and a futuristic element to the user experience.